To do this, we really took advantage of the Cinemachine functionality in Unity. We built a suite of vr camera recording and editing software that integrated with Cinemachine and the Timeline, so that Eric could shoot the cinematography directly in a vr headset. What was great about Unity is that the resulting toolset was so easy to use that Eric did all the camerawork for the 2d version himself.

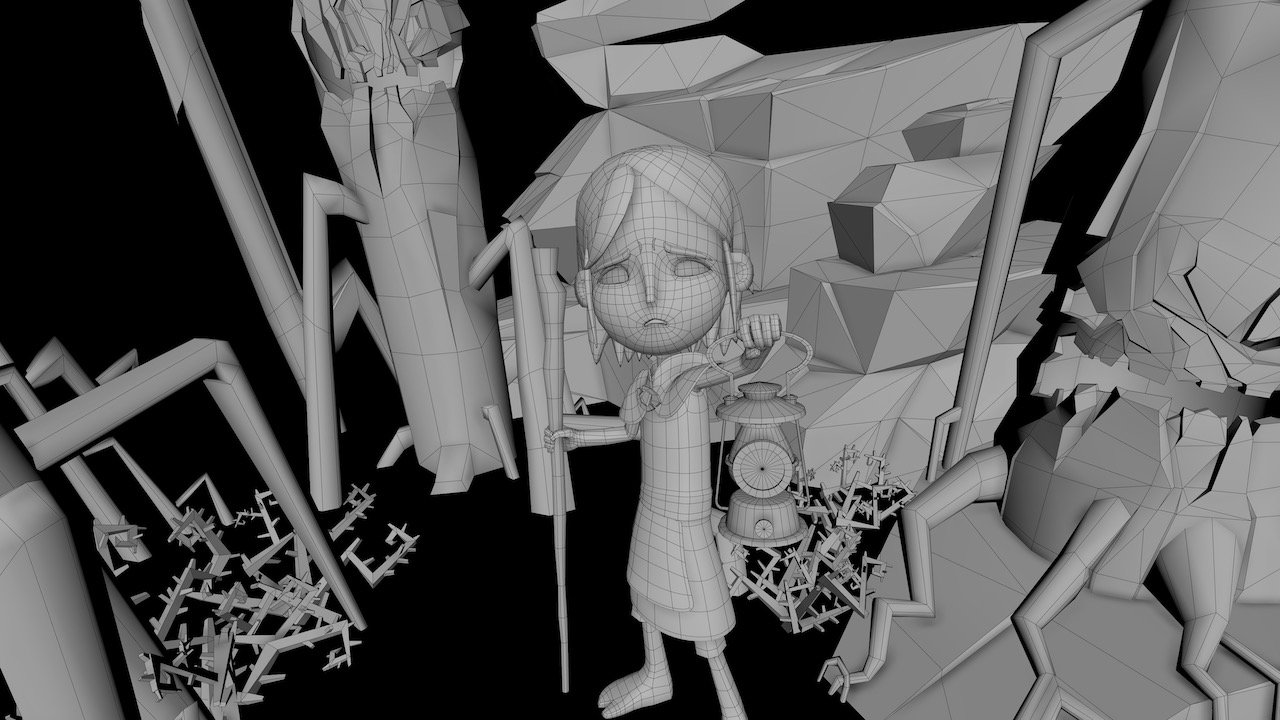

Images © Baobab Studios, “Baba Yaga” 2020

Unity has been central to all of our vr projects dating back to Asteroids! in 2016. Over the course of five interactive vr projects, we have evolved our real-time Storyteller platform on top of Unity. Storyteller has become a comprehensive and pioneering toolset for creating real-time animation at film quality, both to author interactive vr narratives and to reinvent traditional animated film production. We could not have built this toolset without Unity as the backbone.

Larry Cutler: Unity is the game engine that we use to render our scenes in Baba Yaga in real time at 72 fps for mobile vr. While we still model, rig, and animate in Maya, everything else is authored in Unity. We create our non-linear narrative structure in Unity. The stylized vr storybook look was all crafted in Unity, as were the interactivity, character AI, effects, and lighting.

We treat all of our projects as an experiment. As a result, each of our vr narratives has pushed our use of Unity in different directions, and has broadened and strengthened our Storyteller platform over time. Unity has been a flexible foundation for all of these experiments and the resulting projects.

Our entire 2d version of Crow was rendered in real time in Unity. The results exceeded our expectations, and Crow went on to win four Emmy Awards in 2019. Notably, this was the first time that a real-time rendered project won Emmys for outstanding artistic achievement in animation (for both production design and character design) while competing with traditional animated projects, which had much more render time and resources at their disposal.

Bonfire is a highly interactive experience and the project is designed to be experiential. The viewer needs to build a meaningful relationship with the alien Porkbun. We created a brand-new system for authoring this completely non-linear experience. We also developed more advanced emotive character AI systems for the alien Porkbun and the viewer’s sidekick Debbie. Bonfire was a launch partner for the [vr headset] Oculus Quest, so we needed to extend our system to be able to render everything with a mobile phone chipset.

In our series Tools Of The Trade, industry artists and filmmakers speak about their preferred tool on a recent project — be it a digital or physical tool, new or old, deluxe or dirt-cheap. This week, we speak to Larry Cutler about Unity. Cutler is co-founder and CTO of Baobab Studios, the multi-Emmy-winning vr producer. The studio’s most recent title is the fantasy experience Baba Yaga (image at top), which is nominated for two Annies this year. Below, Cutler speaks to us about the central role played by Unity in Baobab’s pipeline:

Crow: The Legend presented many new challenges for our team, as it was significantly more ambitious across all aspects of vr filmmaking. So, we really built out our artist toolsets to handle the increased scale of production. We decided it was important to tell the story of Crow both in vr and for traditional cinema (2d). To do this, we created a 2D Film toolset: our software framework for crafting different versions of a real-time project across multiple mediums simultaneously.

Baba Yaga represents the next step forward in terms of interactivity, vr storytelling, and above all making sure the viewer matters to the characters and story. Baba Yaga pushed our toolset by making the viewer the main character in the story: your choices truly matter and change the experience in dramatic ways. Baba Yaga also required us to innovate with its hand-crafted, theatrical art style as well as its expressive and nuanced character animation.

We decided early on in the production of Baba Yaga to create a cinematic, theatrical version too. We made many improvements to our 2D Film toolset based on our experiences of creating the theatrical version of Crow: The Legend. Our director Eric Darnell wanted to shoot the cinematography himself in Unity, to tell the 2d version of Baba Yaga from an emotional first-person POV.